The CuSum algorithm - a small review

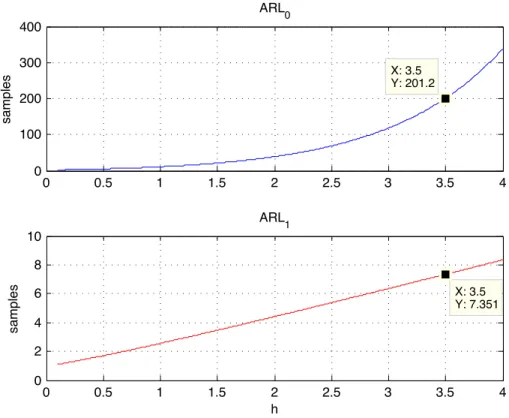

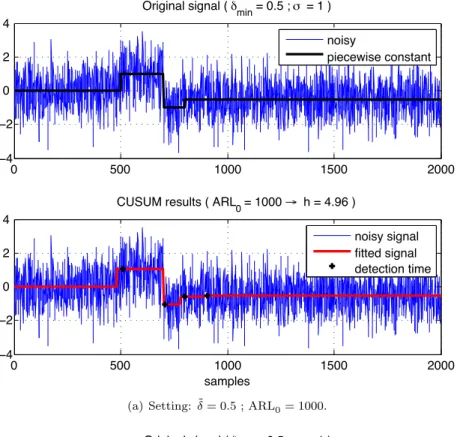

In particular, we shall show that the CUSUM is optimum in detecting changes in the statistics of Itô processes, in a Lorden-like sense, when the expected delay is replaced in

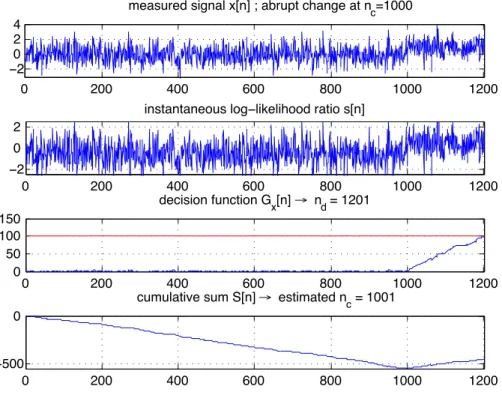

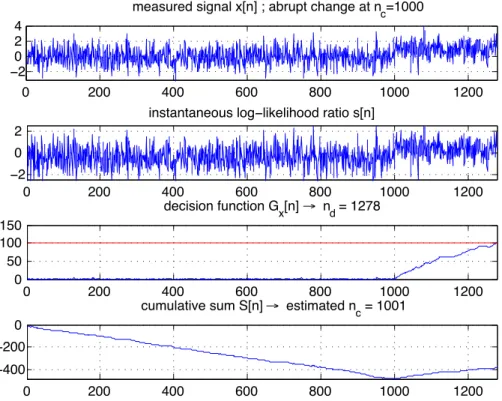

2. The rupture is detected, as the variance is proportional to the distance of the signal from its average. ! 1 length determines the noise rejection capabilities of