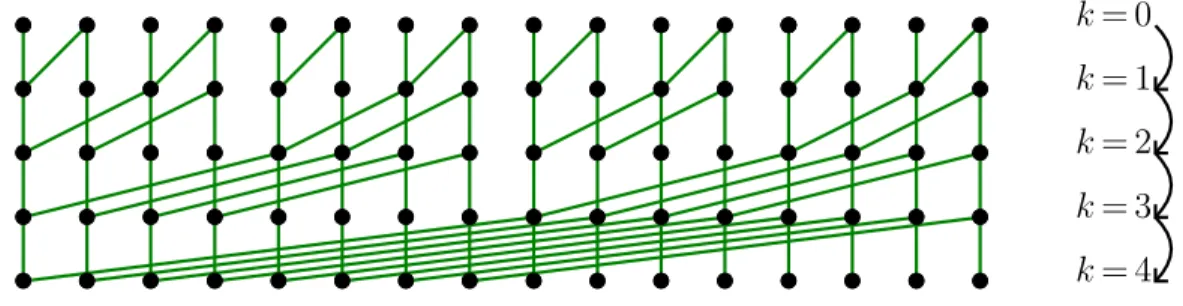

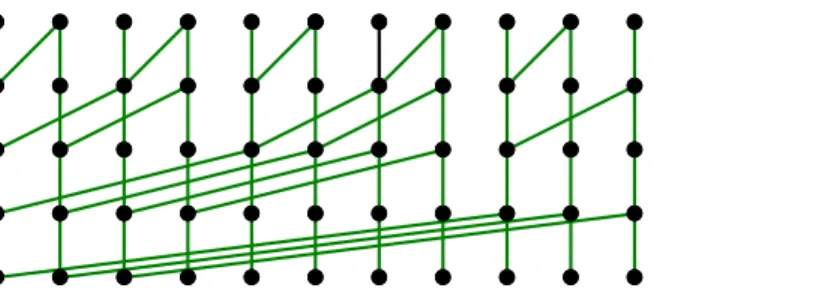

Multi-point evaluation in higher dimensions

Texte intégral

Figure

Documents relatifs

U NIVARIATE INTERPOLATION USING CYCLIC EXTENSIONS One general approach for sparse interpolation of univariate polynomials over gen- eral base fields K was initiated by Garg and

This is why Sedgewick proposed in 1998 to analyze basic algorithms (sorting and searching) when dealing with words rather than with “atomic” keys; in this case, the realistic cost

L’énonciateur est un être discursif qui correspond au(x) contenu(s) exprimé(s) dans un énoncé. Ce n’est pas une personne mais un être abstrait qui s’exprime

Deux méta-analyses plaident en faveur de l’utilisation d’une APD au décours de la chirurgie (dont la chirurgie thoracique) [232, 233]. La méta-analyse de Ballantyne et

We consider new types of resource allocation models and constraints, and we present new geometric techniques which are useful when the resources are mapped to points into a

Abstract: In this paper we consider several constrained activity scheduling problems in the time and space domains, like finding activity orderings which optimize

curse of dimensionality, multidimensional function, multidimensional operator, algorithms in high dimensions, separation of variables, separated representation, alternating

Le zinc joue un rôle de cofacteur pour de nombreux enzymes et intervient ainsi dans de nombreuses fonctions comme le métabolisme des nucléotides, la synthèse des