JEGA: a joint estimation and gossip averaging algorithm for sensor network applications

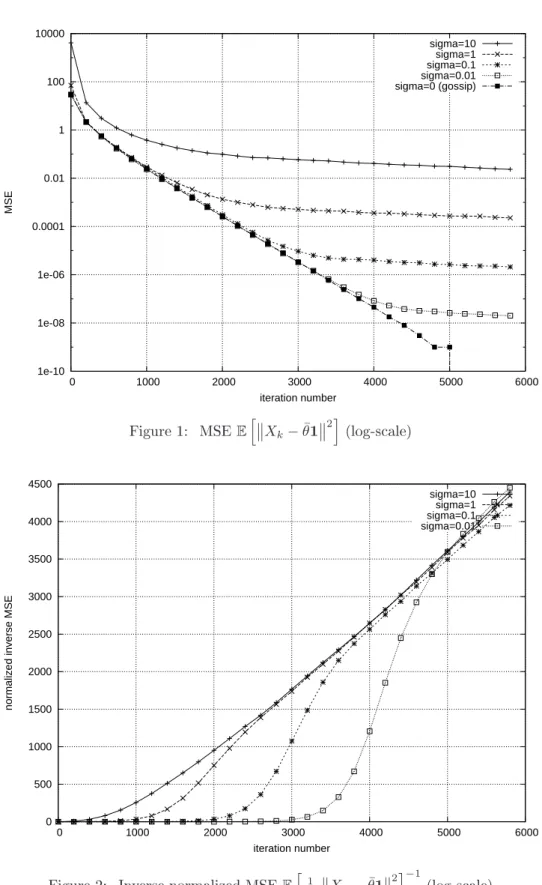
