Hierarchical Topic Models for Language-based Video Hyperlinking

Full text

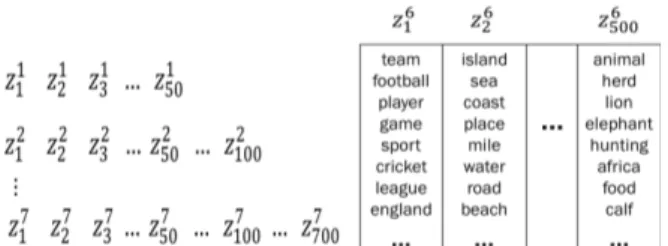

Related documents

By acquiring a topic terminology from a thematically coherent corpus, language model adaptation is restrained to the sole probability re-estimation of n-grams ending with

The basic models, PLSA and LDA, are designed for one-type data, and advanced models are designed for modeling multi-type data, especially for image modeling and

The region-topic model provides an opportunity to represent the meaning of words through grounded features: words can be represented as a vector whose dimensions are region

Based on this, new resources are mapped to latent topics based on their content in order to recommend the most likely tags from the latent topics. We evaluate recall and pre-

The key idea for parallelizing the sampler in the multicore setting is that the global topic distribution and the topic-word table (which we will refer to as state of the

In order to extract the distribution of word senses over time and positions, we use topic models that consider temporal information about the documents as well as locations in form

Given the above schemes for feature extraction on the training and test datasets, we use the computed feature vectors as inputs to a classifier created for each (language,

Topic models, implemented using Latent Dirichlet Allocation (LDA) [2], and n-gram language models were used to extract features to train Support Vector Machine (SVM) classifiers