Unsupervised gene network inference with decision trees and random forests

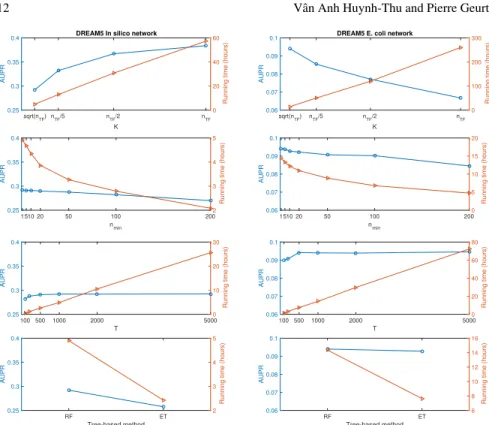
