Local Convergence Properties of Douglas–Rachford and Alternating Direction Method of Multipliers

Related documents

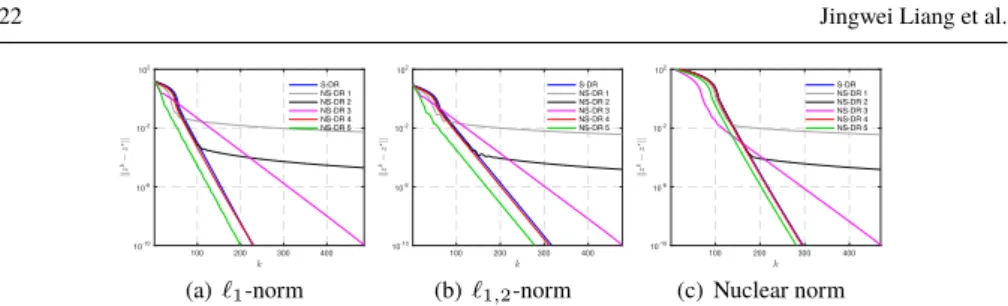

The update steps of this algorithm inherit the principle of ADMM (i.e., alternating between solving a primal and a dual convex optimization problem), but at each

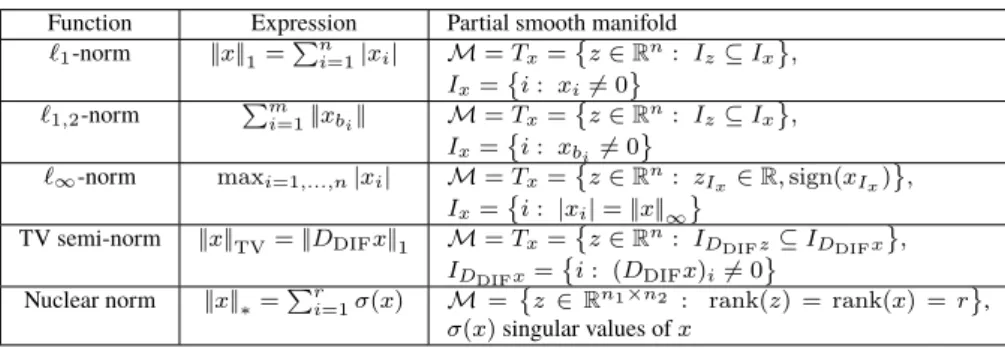

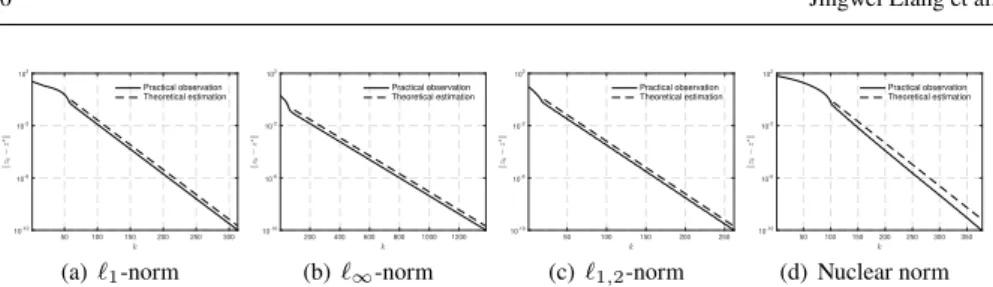

– Finite Time Activity Identification: under the assumption that the nonsmooth component of the optimization problem is partly smooth around a global minimizer relative to its

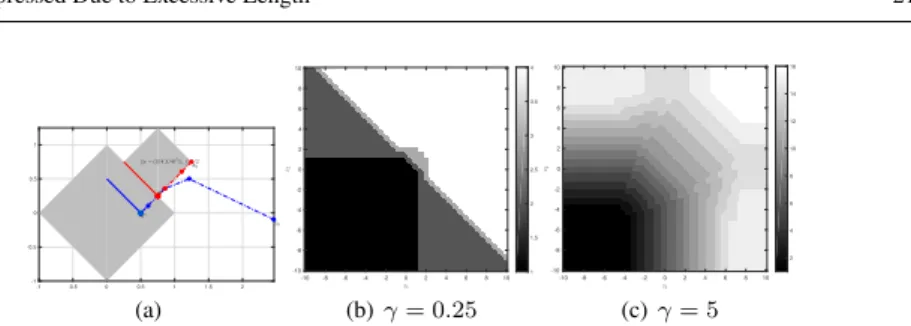

The goal of this work is to understand the local linear convergence behaviour of Douglas–Rachford (resp. alternating direction method of multipliers) when the involved

Moreover, when these functions are partly polyhedral, we prove that DR (resp. ADMM) is locally linearly convergent with a rate in terms of the cosine of the Friedrichs angle between
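A toy illustration of the cosine-of-the-angle rate may help here. The sketch below is not taken from the paper: it assumes the simplest feasibility setting, two lines through the origin in the plane, where the Friedrichs angle is just the angle `theta` between the lines. All function names (`project_onto_line`, `reflect`, `dr_step`, `contraction_ratios`) are hypothetical and chosen for this example only. In this special case the Douglas–Rachford operator equals `cos(theta)` times a rotation, so the norm of the iterate should shrink by `cos(theta)` at every step.

```python
import math

def project_onto_line(z, u):
    """Orthogonal projection of z onto the line spanned by the unit vector u."""
    t = z[0] * u[0] + z[1] * u[1]
    return (t * u[0], t * u[1])

def reflect(z, u):
    """Reflection of z across the line spanned by u: R = 2P - I."""
    p = project_onto_line(z, u)
    return (2 * p[0] - z[0], 2 * p[1] - z[1])

def dr_step(z, u_a, u_b):
    """One Douglas-Rachford step: z <- (z + R_B(R_A(z))) / 2."""
    r = reflect(reflect(z, u_a), u_b)
    return (0.5 * (z[0] + r[0]), 0.5 * (z[1] + r[1]))

def contraction_ratios(theta, z0=(1.0, 2.0), n_iter=20):
    """Run DR on two lines at angle theta; return per-step norm ratios and the last iterate."""
    u_a = (1.0, 0.0)                            # line A: the x-axis (assumed setup)
    u_b = (math.cos(theta), math.sin(theta))    # line B: x-axis rotated by theta
    z = z0
    ratios = []
    for _ in range(n_iter):
        z_next = dr_step(z, u_a, u_b)
        ratios.append(math.hypot(*z_next) / math.hypot(*z))
        z = z_next
    return ratios, z

theta = math.pi / 6
ratios, z_final = contraction_ratios(theta)
# Largest deviation of the observed per-step ratio from cos(theta)
print(max(abs(r - math.cos(theta)) for r in ratios))
```

The two lines intersect only at the origin, so the iterates converge to zero and the printed deviation should be at the level of floating-point rounding. The partly polyhedral setting of the abstract is more general than this planar sketch.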

Keywords: Douglas–Rachford splitting, ADMM, Partial Smoothness, Finite Activity Identification, Local Linear Convergence.

We will also show that for nonconvex sets A, B, the Douglas–Rachford scheme may fail to converge and start to cycle without solving the feasibility problem. We show that this may

In this paper, we consider the complementary problem of finding best case estimates, i.e., how slow the algorithm has to converge, and we also study exact asymptotic rates

We propose a new geometric concept, called separable intersection, which gives local convergence of alternating projections when combined with Hölder regularity, a mild